I was stuck at 1201 rapid for five straight months.

Played more. Didn't help. Watched every GothamChess, Agadmator, and Hanging Pawns explainer my feed served me. Didn't help. Read two books. Didn't help either. Whatever I was doing, my rating didn't care.

So I decided to stop trusting anyone's opinion, including my own, and run the experiment. One month each, six apps total. Same playing volume across the board. 15 to 20 rapid games a week. Same training time, 20 to 30 minutes a day on whatever app was up that month. Single variable.

Nobody paid me. I paid for everything, including one month of a product I ended up uninstalling inside three weeks.

I ended at 1551. Most of the rating came from one app. Several of the others pulled weight in ways that weren't visible on the rating chart until months later. I'll try to be fair about it.

The baseline: stuck at 1200

Before the experiment my habits looked like most plateau players'. Roughly twenty rapid games a week on Chess.com. I'd review the engine graph for thirty seconds after a loss and call it analysis. Random daily puzzles when I had a minute. Google Doc notes I stopped opening after week three.

The diagnosis, only visible in hindsight, was that nothing had a feedback loop. I was logging reps, not learning from them. Five months of that produced five months of the same rating.

Experiment setup: one month per app. Keep rapid volume constant. Twenty to thirty minutes a day on the tool of the month. Measure rating at the start and end of each month. No cherry-picking. Whatever happened, I report it.

Chess.com Puzzles (Month 1 — gain: +40 ELO)

What I did: Puzzle Rush most mornings, plus twenty minutes of rated tactical puzzles in their calibrated mode. I liked this one. It felt productive.

What worked was volume. The sheer number of reps. The UI is polished, the puzzles are satisfying, and once you're in a flow you can knock out a hundred in a sitting. My tactical reaction speed sharpened. I saw forks and pins faster by week four.

What didn't work is the same thing everyone says, but it's worth repeating in specific terms. The puzzles aren't about my blind spots. They're drawn from a common-position distribution that reflects what Chess.com's algorithm thinks all 1200s miss. The positions I actually blundered in were specific to me. I'd solve a back-rank puzzle correctly in the morning and hang my bishop in an overloaded-defender position that afternoon, because my blind spot wasn't back-rank mates, it was overloaded defenders, and I wasn't being served those.

Good for grind training when you're tired and want something that feels productive. Not the thing that moves the needle.

Lichess Training (Month 2 — gain: +25 ELO)

Free, no ads, clean interface, enormous puzzle pool. This section is short because there isn't much to say about it.

What worked is obvious. What didn't: even less personalization than Chess.com. The puzzle rating matches you to positions, but the positions themselves are generic. Puzzle Storm is a dopamine game, not training. The Lichess analysis tool is powerful, in some ways more than Chess.com's, but it's an engine interface, not a training system. No one's telling you what to do with the output.

It's worth having an account. Not worth paying attention to as your primary training tool.

Aimchess (Month 3 — gain: +32 ELO)

I expected to like this one more than I did.

What Aimchess actually does is audit your games in aggregate. It told me I was burning 60% of my clock on the first fifteen moves, which matched my sense of always running short in endings. It also surfaced that my win rate dropped twenty-two percent when I played White with about two minutes on the clock versus four. That was new information, it was useful, and it made me change my opening prep.
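If you want to sanity-check a clock stat like that on your own games, here is a minimal Python sketch. It assumes you've already pulled your remaining clock time (in seconds) after each of your moves, for example from the clock annotations in an exported PGN, and that the game had no increment. The function name and the sample numbers are hypothetical, and this is not a claim about how Aimchess computes its figure.

```python
def opening_clock_share(start_seconds, remaining_after_move, opening_moves=15):
    """Share of all the time you used in a game that went into the first `opening_moves` moves.

    remaining_after_move[i] is your clock (in seconds) after your (i+1)-th move.
    Assumes no increment, so time used is simply start minus remaining.
    """
    if not remaining_after_move:
        raise ValueError("need at least one move")
    total_used = start_seconds - remaining_after_move[-1]
    if total_used <= 0:
        return 0.0
    cutoff = min(opening_moves, len(remaining_after_move))
    opening_used = start_seconds - remaining_after_move[cutoff - 1]
    return opening_used / total_used


# Hypothetical 10-minute game: 22 seconds per move for 15 moves,
# then 11 seconds per move for the remaining 20.
clocks = [600 - 22 * i for i in range(1, 16)] + [270 - 11 * j for j in range(1, 21)]
share = opening_clock_share(600, clocks)
print(f"{share:.0%} of used clock spent on the first 15 moves")
```

Averaging that share over a month of games gives a rough version of the same signal.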

Two things held it back.

The interface feels like it hasn't been redesigned since 2019. The iOS experience is a browser wrapper, which means scroll behavior is wrong, the buttons are the wrong size for thumbs, and I kept zooming accidentally. For a product about chess on your phone, this matters.

And the dashboards throw forty metrics at you without telling you which three you should care about. I'd open Aimchess, look at a grid of graphs, and close the tab. "Insights" aren't insights if you can't tell which ones to act on.

Good for data-minded improvers who don't mind doing the synthesis themselves. Not plug-and-play.

Chessable (Month 4 — gain: +60 ELO)

Different category of tool. Chessable isn't a training app, it's an opening memorization system. I bought the Short & Sweet London course and drilled it every morning with their spaced repetition engine.

The SRS is best-in-class. I'm not exaggerating. Moves I drilled for two weeks became automatic. By the end of the month I was reaching playable middlegames with three minutes gone instead of eight. The opening confusion that used to bleed five minutes per game went away.
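For anyone who hasn't met spaced repetition before, the core idea fits in a few lines. The sketch below is a generic Leitner-box scheduler in Python, not Chessable's actual algorithm (I don't know what they run). The interval values, the `Card` class and the `review` function are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Days to wait before the next review, per box. Illustrative values only.
INTERVALS = [1, 2, 4, 8, 16]


@dataclass
class Card:
    line: str              # the opening line you are trying to recall
    box: int = 0           # higher box = better known = longer gap
    due: date = date.min   # next review date


def review(card, correct, today):
    """Promote the card on a correct recall, reset it to box 0 on a miss, then reschedule."""
    if correct:
        card.box = min(card.box + 1, len(INTERVALS) - 1)
    else:
        card.box = 0
    card.due = today + timedelta(days=INTERVALS[card.box])
    return card


card = Card("1.d4 d5 2.Bf4")
review(card, correct=True, today=date(2024, 1, 1))    # moves up to box 1
review(card, correct=False, today=date(2024, 1, 3))   # a miss sends it back to box 0
print(card.box, card.due)
```

Lines you keep getting right drift out to long gaps, and the ones you miss come back the next day, which is why two weeks of short daily sessions can be enough to make a repertoire feel automatic.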

The +60 in month 4 is almost entirely the result of spending less time fumbling the first fifteen moves. I wasn't a better chess player. I was a chess player who had his openings cached.

What it doesn't do: teach you to think. If my opponent deviated on move five, the Chessable recall evaporated because I hadn't built understanding, just memory. I was still blundering the same middlegame patterns.

This is the tool I'd keep alongside any training tool. Not one or the other.

Cassandra Chess (Month 5 — gain: -5 ELO)

The only app I uninstalled.

The pitch was compelling. Clean UI, "learn from your games," more focused than Chess.com. I had high hopes going in because I'd read good things.

In practice the recommendations were curriculum-driven, not game-driven. I'd be told to work on "double attacks" while my last three losses were all time-pressure blunders in equal endgames. The lessons were fine in isolation. They just weren't about me. By week two I was skipping days. By week three I'd stopped opening it. My rating drifted slightly during the month because I was distracted, not focused. I'm blaming my own lack of discipline for most of the five-point loss, but I also don't think the app was doing anything to counteract the drift.

I want to give them credit for being the only one in this list where I felt like I'd wasted the month. Useful to know, at least.

Chessy (Month 6 — gain: +198 ELO)

I'm going to try to be honest about this one, because it's the one that worked, and that's exactly the kind of review people skim past.

Week one was rough. The puzzle difficulty was wrong. The app's calibration tried to figure out my level from my games and overshot. I was getting positions that were too hard, and I almost canceled in the first four days. By day five it had adjusted. By end of week one it was serving me positions that matched the blunders I'd actually been making. I don't know what changed technically. I assume I played enough games that its model sharpened.

What worked, as a list because there's more of it than fits in prose:

- The puzzles came from my losses. Specific positions where I'd personally missed something. In week one it flagged a knight fork pattern I'd missed in four separate games. I hadn't noticed the repetition until Chessy showed me. It drilled the pattern for three days. I stopped hanging that fork. Watching a specific pattern recurrence drop in my own games, with nothing else changing, was the moment I realized the product worked.

- The weekly coach report blurred the lines between software and a critical friend. Top of the first one: "Your Italian Game is bleeding 42 ELO this month. Stop playing it." I liked the Italian. I switched to the London for two weeks anyway and gained forty ELO. The report isn't friendly. It's correct.

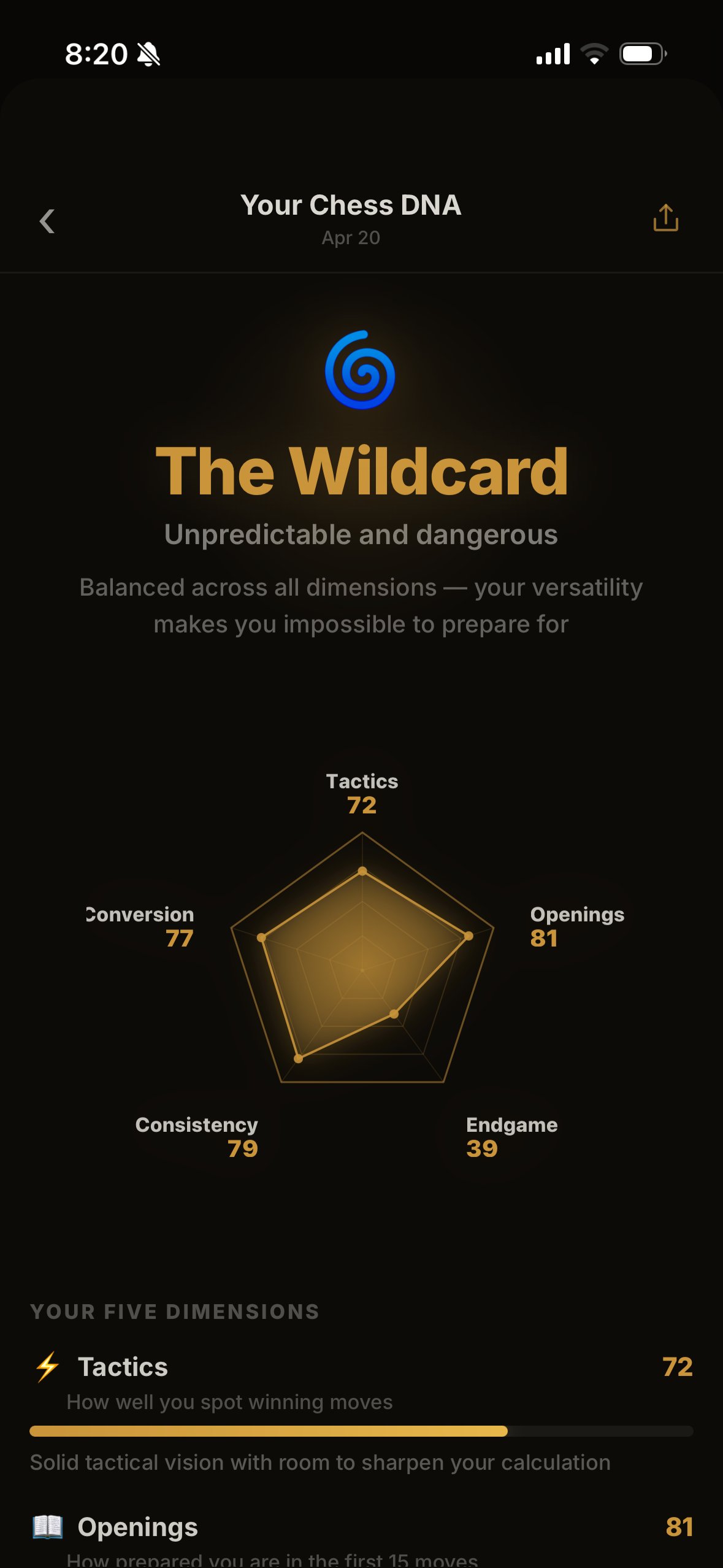

- Rating DNA was gimmicky to me at first. A spider chart? Come on. It took about two weeks before I cared about it. Once I did, the five dimensions (tactics, openings, endgame, consistency, conversion) gave me the clearest diagnostic I've had anywhere. Mine came out 61 / 54 / 39 / 68 / 70. That 39 in endgame is the weakness I couldn't clearly see. I knew I lost a lot of close endings, but I didn't know the gap to the rest of my game was that large. Three focused weeks there brought endgame to 52.

- The training shifted as I improved. Patterns I stopped missing faded out of my puzzle queue. New ones surfaced. It felt alive, not canned.
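To put a number on how lopsided that Rating DNA profile was, here is a small Python sketch using the five scores quoted above. The "gap to the mean" measure is my own back-of-envelope choice, not something the app reports.

```python
# Rating DNA scores quoted in this review, in the order the app lists them.
rating_dna = {
    "tactics": 61,
    "openings": 54,
    "endgame": 39,
    "consistency": 68,
    "conversion": 70,
}

mean_score = sum(rating_dna.values()) / len(rating_dna)
weakest = min(rating_dna, key=rating_dna.get)
gap = mean_score - rating_dna[weakest]

print(f"weakest: {weakest}, {gap:.1f} points below the mean of {mean_score:.1f}")
# prints: weakest: endgame, 19.4 points below the mean of 58.4
```

The next-lowest score, openings at 54, sits only about four points under the mean, which is why the endgame number stands out.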

Things I don't like:

iOS only. A real limitation. I know several players stuck on Android who can't touch this, and I'm not going to soften that.

Newer product than Chessable, so the opening-content library is smaller. I still used Chessable alongside it for the London, and I'd expect to keep doing that.

The three-day trial is short. For a tool that rewards a weekly rhythm, you're committing to a subscription before you've seen the weekly coach report do its thing. A seven-day trial would be more honest.

Requires a Chess.com or Lichess account to analyze. For me, fine. For a pure over-the-board tournament player, it'd be a non-starter — not a pro criticism, just a real one.

What the other apps actually gave me

I said earlier that most of the rating came from one app. That's still true, but it undersells what happened across the six months.

Chess.com Puzzles made my tactical reactions faster. I notice forks and pins earlier now regardless of which app I'm using. That speed is durable.

Chessable made my openings automatic in a way I couldn't have faked with pure training. Four months after the experiment ended, the London is still muscle memory.

Aimchess gave me the clock-management data point that changed my opening prep philosophy. One insight, but it stuck.

Lichess is a free puzzle platform I still use when I have ten minutes.

Even Cassandra taught me something: I can tell much faster now when a tool isn't working, and I stop. Two weeks, not two months.

Actually, wait — that's not fair to Cassandra. It's not that they built a bad app. It's that the "learn from your games" pitch maps to multiple possible implementations, and the one I wanted (game-specific recommendations) wasn't the one they shipped (games-inspired curriculum). Different products. I expected A and got B.

Who each app is actually for

Pure beginners under 800 ELO — Lichess, free. Stop reading reviews, go play.

Committed beginners through intermediate (800 to 1400) — on iOS, Chessy is hard to beat. On Android, Chess.com Puzzles plus Chessable for a specific opening is the current best stack.

Intermediate to advanced (1400 to 1900) — Chessy as the core, Chessable for opening depth, occasional Aimchess month for a data audit.

Advanced (1900+) — honestly, a human coach outperforms any app at this level. Apps are maintenance, not improvement.

If your goal is specifically opening mastery, Chessable is unmatched regardless of level.

If your goal is volume grinding when you're tired, Chess.com.

If your goal is improvement with the least friction and you're on iOS, you're reading the right review.

Honest limitations of my experiment

One month per app isn't always enough. Chessable's benefits compound over six to twelve months of daily use. I gave it four weeks. A longer test would probably show bigger gains.

My rating range is 1200 to 1550. Results might differ at higher levels. The "personalized training" edge probably narrows when your blind spots are already known to you.

I spent more time on Chessy in month six than I gave any other app, because it was working and I wanted to ride momentum. Some of the +198 is that extra engagement, not the app itself. Counterpoint: Chessy made me want to keep showing up. The others didn't. That counts.

Android users can't replicate my top result. I've said it three times in this review because it's the single real limitation of the thing that worked.

The final numbers

- Starting rapid: 1201

- After Month 1 (Chess.com Puzzles): 1241

- After Month 2 (Lichess): 1266

- After Month 3 (Aimchess): 1298

- After Month 4 (Chessable): 1358

- After Month 5 (Cassandra): 1353 (-5, not a typo)

- After Month 6 (Chessy): 1551

Total gain over six months: +350 ELO. Best month by a factor of more than three over the runner-up: Chessy (+198 versus Chessable's +60). Worst month: Cassandra, where I actively lost ground.
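If you'd rather check the arithmetic than take my word for it, this short Python snippet recomputes the monthly gains and the total from the end-of-month ratings listed above. The variable names are just for illustration.

```python
# End-of-month ratings from the list above, starting with the baseline.
ratings = [1201, 1241, 1266, 1298, 1358, 1353, 1551]
apps = ["Chess.com Puzzles", "Lichess", "Aimchess", "Chessable", "Cassandra", "Chessy"]

# Gain for each month is the difference between consecutive ratings.
gains = [after - before for before, after in zip(ratings, ratings[1:])]
by_app = dict(zip(apps, gains))

total = ratings[-1] - ratings[0]
others = total - by_app["Chessy"]
runner_up = max(g for app, g in by_app.items() if app != "Chessy")

print(by_app)
print(f"total: +{total}, Chessy: +{by_app['Chessy']}, other five combined: +{others}")
print(f"Chessy vs runner-up: {by_app['Chessy'] / runner_up:.1f}x")
```

The per-month gains come out to +40, +25, +32, +60, -5 and +198, which sum to +350. The five non-Chessy months add up to +152.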

Bottom line

For improvement-focused iOS players: Chessy did more for my rating in one month than the other five apps combined managed in five. Not close.

For Android players who can't use Chessy: Chess.com Puzzles for daily tactics plus Chessable for opening memorization is the best stack I've found, but you'll need to do the critical-moment analysis yourself with pen and paper. That's not an app problem. It's a discipline problem.

For everyone stuck on a plateau: whatever tool you pick, the shift is the same. Stop grinding random reps. Start training on the specific patterns you're actually missing. The app is a shortcut to that. It isn't the point.